Cyotek WebCopy can set a custom user agent string, include or exclude particular MIME types from the copy process, and more. Managing these more advanced tasks, and viewing the downloaded site afterwards, isn't as straightforward as it should be. The interface doesn't really guide you through the process; you have to browse the options yourself and figure out what to do. PC or offline browsing novices will probably struggle to cope, at least with more advanced sites.

If you know what you're doing, though, there are a lot of unusual extras here. WebCopy supports the use of regular expressions in many areas, for example, and there's a built-in editor to help you create and test them. Once a site has been downloaded, WebCopy even generates a visual site map which you can customise and save as an image.

Cyotek WebCopy has a cluttered interface which can be confusing, especially if you're new to offline browsing. It does have some powerful options, though, and is completely free (no adware or other annoyances), so if you're interested in creating local copies of websites then it's worth trying.
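To picture what the custom user agent and MIME type options amount to at the HTTP level, here is a minimal Python sketch. It is not how WebCopy is implemented; the user agent value, the excluded MIME types, and the helper names `build_request` and `should_save` are all assumptions made up for illustration.

```python
from urllib.request import Request

# Hypothetical values; WebCopy lets you choose your own in its options.
USER_AGENT = "ExampleOfflineCopier/1.0"
EXCLUDED_MIME_TYPES = {"video/mp4", "application/zip"}


def build_request(url):
    """Create (but do not send) a request carrying the custom user agent."""
    return Request(url, headers={"User-Agent": USER_AGENT})


def should_save(content_type_header):
    """Decide whether a response should be saved, based on its MIME type.

    The Content-Type header may carry parameters such as a charset,
    so only the part before the first ';' is compared.
    """
    mime = content_type_header.split(";", 1)[0].strip().lower()
    return mime not in EXCLUDED_MIME_TYPES
```

A copier built along these lines would call `build_request` for every URL it fetches and check `should_save` against each response's `Content-Type` header before writing anything to disk.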


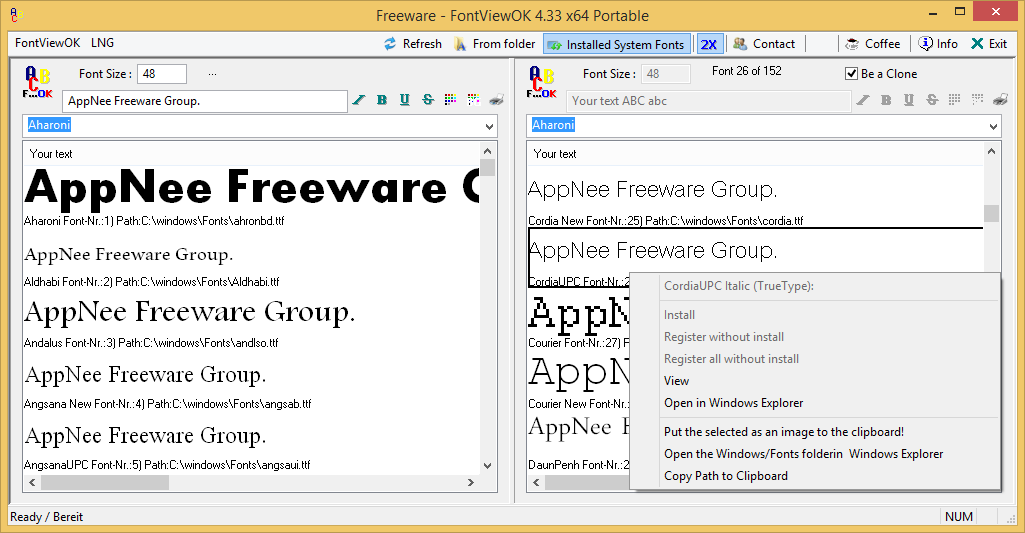

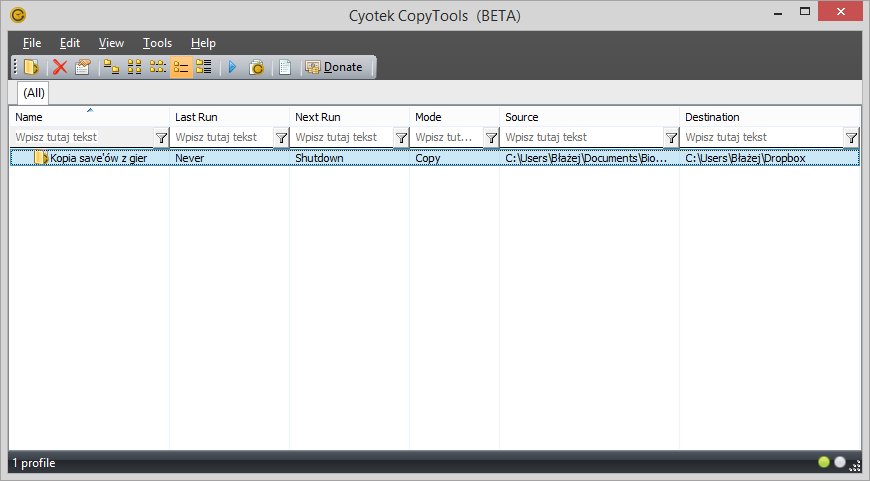

Cyotek WebCopy is a clever tool which copies all or part of a website to your hard drive for offline browsing. Grabbing a small and simple site is easy: just enter a URL in the "Website" box, and choose the Copy option. WebCopy downloads that page and any pages it links to (within the same domain), then repeats the process until it's done. You're then able to view the local copy of any page, and browse it as though you were online.

If your needs are more complex then WebCopy provides various options to help. You can tell the program to exclude particular sections of the site, for example. You're also able to predefine user names and passwords, allowing WebCopy to access protected content.

Cyotek CopyTools: we use this for offline backups of source code, assets and resources, documents, and pretty much anything else we generate, including backing up the backups. Macrium Reflect: new for 2021. Not really new; I've been using this to create DR images of some physical machines for a few years now.

With today's internet speeds, there is rarely much reason to download an entire website for offline use. In our previous tutorial we described how to define rules. This example follows on from that and describes how you can use rules to crawl an entire website but only save images.

To do an image-only copy of a website, we need to configure a number of rules. The first rule instructs WebCopy to not download any files at all to the save folder, but to still crawl HTML files. It uses the expression .* to match all URLs, and the rule options Exclude and Crawl Content. Each subsequent rule adds a regular expression to match a specific image extension, for example \.png, and then uses the Include option to override the previous rule and cause the file to be downloaded. Once a match is made there is no need to continue checking rules, so the Stop Processing option is also set. Alternatively, you could just have a single rule which matches multiple extensions, for example \.(?:png|gif|jpg).

With these rules in place, when you copy a website WebCopy will scan all HTML files but only download to the save folder those files matching the specified extensions.
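The image-only rules described above can be sketched in Python. This only illustrates the described behaviour and is not WebCopy's actual implementation: the `Rule` class, the `evaluate` function, and the example.com URLs are hypothetical, and the sketch assumes that rules are checked in order, with a later Include overriding an earlier Exclude.

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    pattern: str                   # regular expression tested against the URL
    exclude: bool = False          # matching URLs are not saved
    include: bool = False          # overrides an earlier exclusion
    crawl_content: bool = False    # excluded pages are still scanned for links
    stop_processing: bool = False  # skip the remaining rules after a match


def evaluate(url, rules):
    """Return (download, crawl) for a URL under the assumed rule semantics."""
    download, crawl = True, True   # with no matching rule, copy as normal
    for rule in rules:
        if not re.search(rule.pattern, url):
            continue
        if rule.exclude:
            download = False
            crawl = rule.crawl_content
        if rule.include:
            download = True
        if rule.stop_processing:
            break
    return download, crawl


# Rule 1 excludes everything but keeps crawling; rule 2 re-includes images.
IMAGE_ONLY_RULES = [
    Rule(r".*", exclude=True, crawl_content=True),
    Rule(r"\.(?:png|gif|jpg)", include=True, stop_processing=True),
]

for url in ("https://example.com/index.html",
            "https://example.com/img/logo.png"):
    print(url, evaluate(url, IMAGE_ONLY_RULES))
```

Because the image pattern is not anchored, `\.gif` would also match a host name such as www.gifts.com. Ending the expression with `$` is one way to restrict it to file extensions, assuming the URLs carry no query string.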
